Tutorial: Get started with CPU profiling

Sometimes your app works, but you want to increase performance by boosting its throughput or reducing latency. Other times, you just want to know how your code behaves at runtime, determine where the hot spots are, or figure out how a framework operates under the hood.

Partly, you can get this information at design time, as IntelliJ IDEA provides you with all sorts of clues that can be produced statically. Of course, you cannot learn about all the bugs and inefficiencies by just looking at the code, because things get more complicated at runtime. Verifying any guesses you have can prove to be a tough task without the right tools at hand.

The profiler offers a bird’s eye view of arbitrarily large execution chunks, which helps you see problems at scale. In this tutorial, we are going to take a look at the capabilities of IntelliJ Profiler.

The example application

Let’s say we have the following application: https://github.com/flounder4130/profiler-example.

The program repeatedly tries to create a path in the file system using createDirectories() from NIO2. Then we measure the throughput using an improvised benchmark.

Every time a task runs, the benchmark logic stores the current timestamp to a collection and removes all timestamps that point to a time earlier than the current time minus some interval. This makes it possible to find out how many events occurred during this time interval by just querying the collection. This benchmark is supposed to help us evaluate the performance of the createDirectories() method.

When we run the program, we find that the figures are suspiciously low:

Let’s profile it and see what’s wrong.

Run with profiler

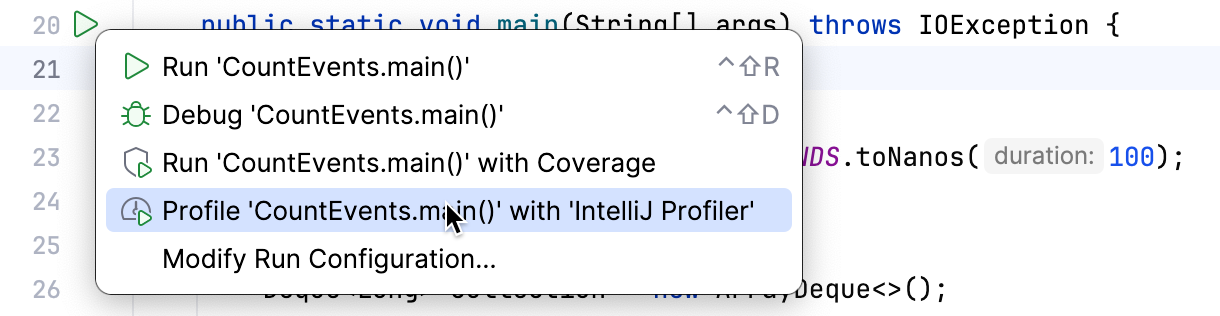

Click

near the entry point of the application – the

CountEventsclass in our case.From the menu, select Profile CountEvents.main() with IntelliJ Profiler.

When the app has finished running, a popup will appear, prompting us to open the report. If we dismiss the popup by mistake, the report will still be available in the Profiler tool window.

Let's open the report by clicking the popup and see what's inside.

Analyze the profile

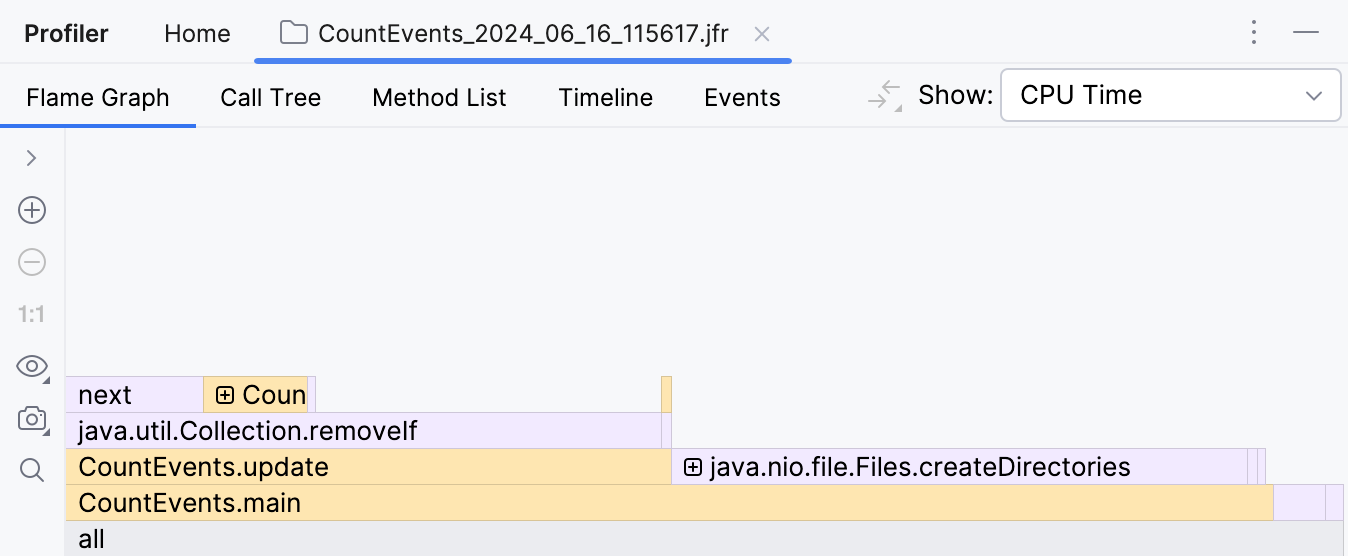

The first thing we see after opening the report is the flame graph. The data on the flame graph is essentially a summary of all sampled stacks. The more samples with the same stack the profiler collects, the wider this stack grows on the flame graph. So, the width of the frame is roughly equivalent to the share of time spent in this state.

To our surprise, the createDirectory() method did not account for the most execution time. Our homemade benchmark took about the same amount of time to execute! Furthermore, if we look one frame above, we see that this is primarily because of the removeIf() method, which accounts for almost all the time of its parent method, update().

This clearly needs some looking into.

Optimize the code and verify the results

Seems like the code responsible for removing events from the queue is doing extra work.

Since we’re using an ordered collection, and events are added in chronological order, we can be sure that all elements subject for removal are always at the head of the queue. If we replace removeIf() with a loop that breaks once it starts iterating over events that it is not going to remove, we can potentially improve performance:

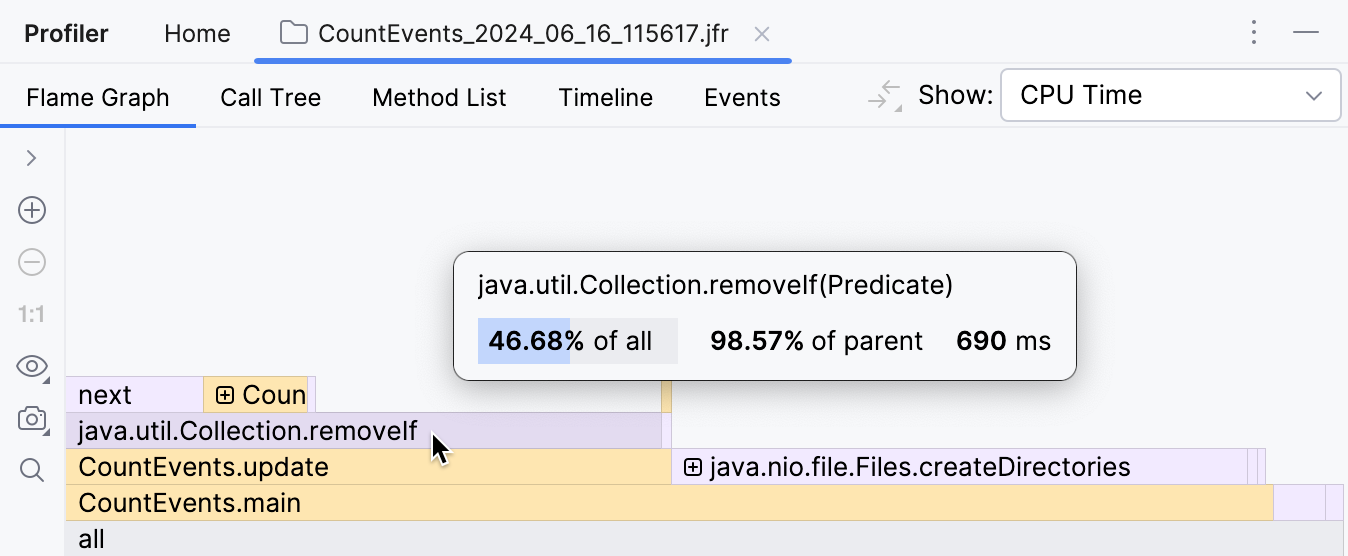

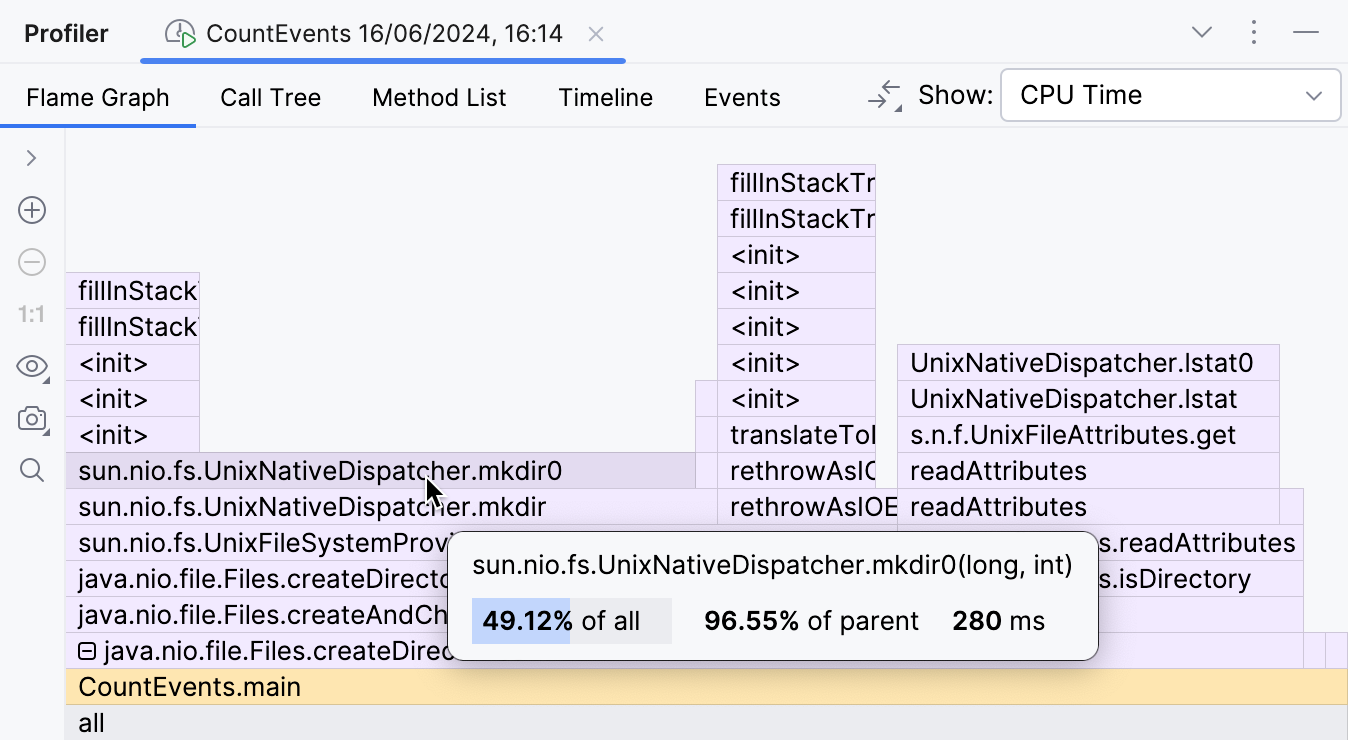

Let’s change the code, then profile our app once again and look at the result:

The overhead from the benchmark logic is now minimal as it should be, and the createDirectories() frame now occupies approximately 95% of the entire execution time.

The improvement is also visible in the console output:

Further optimizations

Having solved the problem in the benchmark, we could stop here and pat ourselves on the back. But what’s going on with our createDirectories() method? Is it too slow?

There seems little room for optimization here, since this is a library method, and we don't have control over its implementation. Still, the profiler can give us a hint.

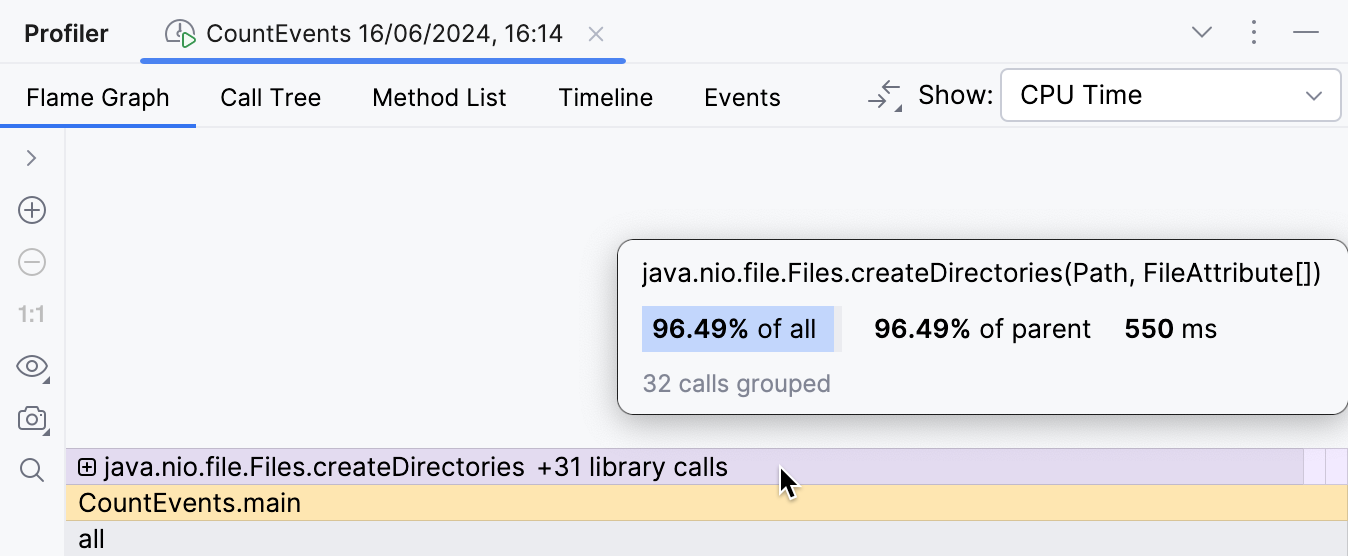

By expanding the folded library frames, we can inspect what's happening inside:

This part of the flame graph shows that the main contributor to the execution time of createDirectories() is sun.nio.fs.UnixNativeDispatcher.mkdir0. A large portion of this frame has nothing on top of it. This is referred to as method's self-time and indicates that there is no Java code beyond that point. There might be native code, though.

Since we are trying to create a directory, which requires calling the operating system's API, we might expect mkdir's self-time to be dominated by native calls. UnixNativeDispatcher in the class name seems to confirm that.

We'll need native stacks to find this out:

Include native samples in the snapshot

Press Ctrl+Alt+S to open settings and then select . Select the Collect native calls checkbox.

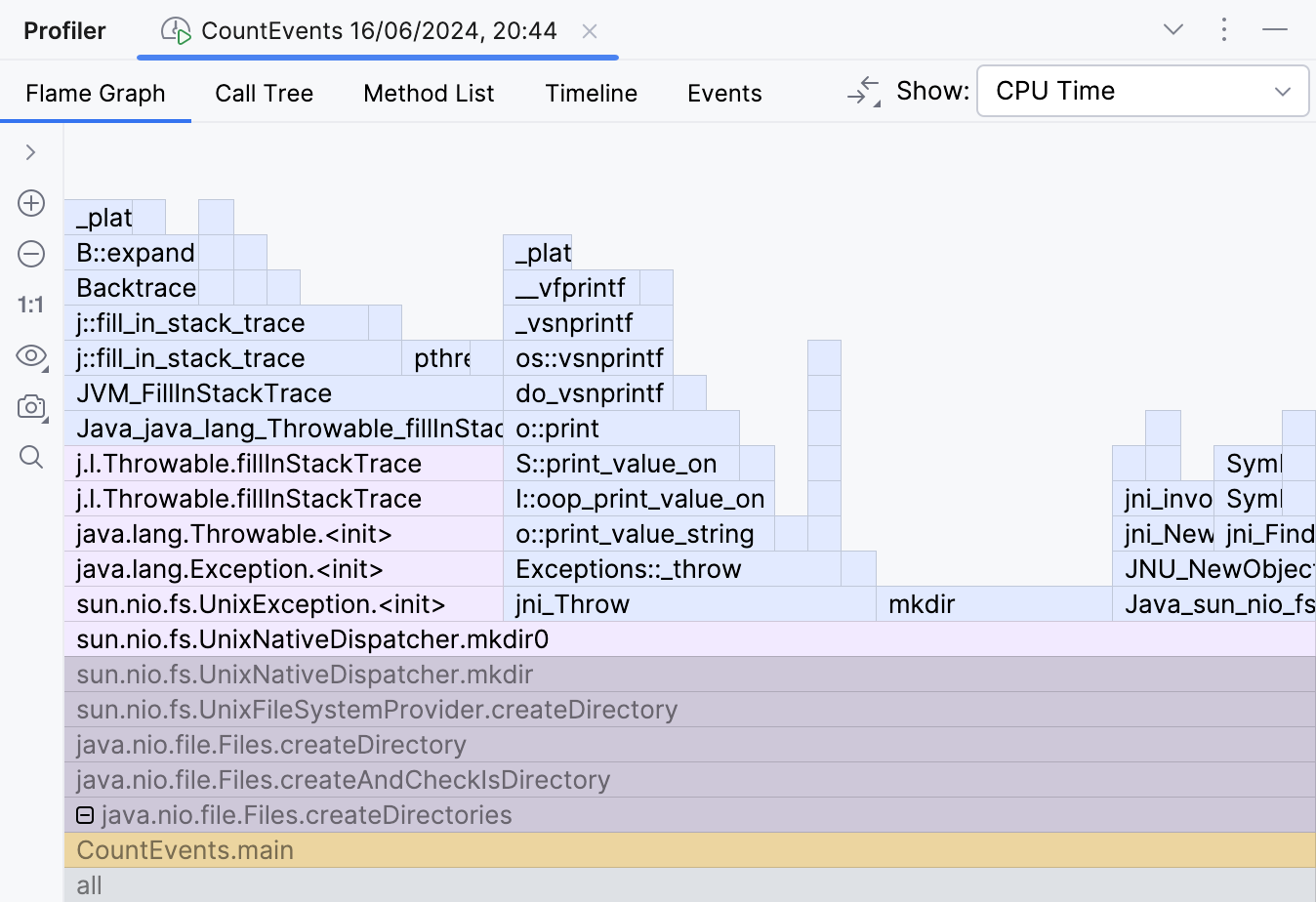

Rerunning the application with native profiling enabled shows us the full picture.

Here's the catch. The documentation for the createDirectories() method says:

The description is valid from the Java perspective, as it doesn't raise any Java exceptions. However, when attempting to create a directory that already exists, exceptions are thrown at the operating system level. This leads to a significant waste of resources in our scenario.

Let’s try to avoid this and wrap the call to createDirectories() in a Files.exists() check:

Our code is lightning fast now:

It is now about 16 times faster than it originally was. This exception handling was really expensive! The results may differ depending on the hardware and the environment, but they should be impressive anyway.

Snapshots' diff

Actually, not only can you compare benchmark figures, but it is possible to compare the flame graphs themselves.

Open two snapshots for comparison

With the newer snapshot open, click Compare With Baseline in the right-hand part of the toolbar, then select the older snapshot.

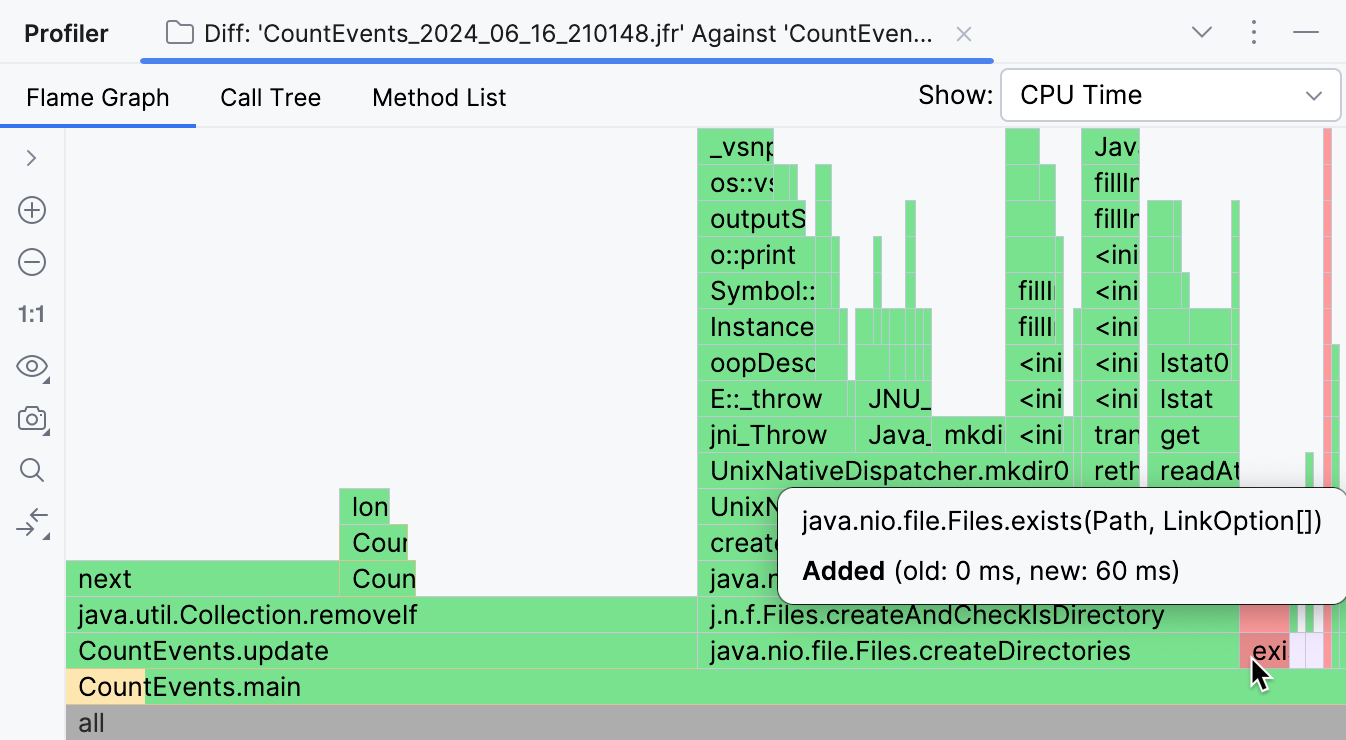

Here is the result of comparing the original snapshot with the one we got after we introduced the optimizations described in the previous chapters:

Frames missing from the newer snapshot are highlighted in green, while the new ones are colored red. If a frame has several colors, this means the frame is present in both snapshots, but its overall runtime has changed. As visible in the screenshot above, the vast majority of operations originally performed by our program were unnecessary, and we were able to eliminate them.

CountEvents.update() is completely green, which means our first change resulted in near-complete improvement in the method's runtime. Adding Files.exists() was not a 100% improvement, but it effectively removed createDirectories() from the snapshot, only adding 60 ms to the program runtime.

Summary

In this tutorial, we used a profiler to detect and fix a performance problem. We also witnessed how even a well-known API may have implications that seriously affect the execution times of a Java program. This shows us why profiling is a useful skill even if you are not optimizing for millions of transactions per second.

The tutorial is far from exhaustive: IntelliJ IDEA provides a lot of ways to analyze the profiler report, and the flame graph is only one of them. The range of cases where the profiler may be useful is also immense. If you want to learn more approaches and get more profound knowledge on IntelliJ IDEA, check out the other topics in this section.