Hadoop monitoring

Professional feature: download PyCharm Professional to try.

With the Big Data Tools plugin, you can monitor your Hadoop applications.

Typical workflow:

Create a connection to a Hadoop server

In the Big Data Tools window, click the Add button and select Hadoop under the Monitoring section.

The Big Data Tools Connection dialog opens.

Mandatory parameters:

URL: the address of the target server.

Name: the name of the connection, used to distinguish it from other connections.

Optionally, you can set up:

Enable tunneling: creates an SSH tunnel to the remote host. This is useful if the target server is in a private network but an SSH connection to a host in that network is available.

Select the checkbox and specify an SSH connection configuration (click ... to create a new SSH configuration).
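An SSH tunnel of this kind is typically local port forwarding. As a rough illustration (not the plugin's implementation), the following Python sketch builds an equivalent OpenSSH command that forwards a local port to the target server through a reachable SSH host. The function name `ssh_tunnel_command` and all host names and ports are hypothetical.

```python
import shlex
from typing import List, Optional


def ssh_tunnel_command(jump_host: str, target_host: str, target_port: int,
                       local_port: int, ssh_user: Optional[str] = None) -> List[str]:
    """Build an OpenSSH command for local port forwarding.

    Traffic sent to localhost:local_port is forwarded through jump_host
    to target_host:target_port. -N tells ssh not to run a remote command.
    """
    destination = f"{ssh_user}@{jump_host}" if ssh_user else jump_host
    return ["ssh", "-N", "-L", f"{local_port}:{target_host}:{target_port}", destination]


if __name__ == "__main__":
    # Hypothetical hosts and ports, for illustration only.
    command = ssh_tunnel_command("bastion.example.com", "rm.internal", 8088, 18088, "alice")
    print(shlex.join(command))
    # ssh -N -L 18088:rm.internal:8088 alice@bastion.example.com
```

While such a manually started tunnel is running, the server in this example would be reachable at http://localhost:18088 from your machine.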

Per project: select to enable these connection settings only for the current project. Deselect it if you want this connection to be visible in other projects.

Enable connection: deselect if you want to restrict the use of this connection for some reason. By default, newly created connections are enabled.

Enable HTTP basic authentication: connect using HTTP basic authentication with the specified username and password.

Enable HTTP proxy: connect through an HTTP proxy with the specified host, port, username, and password.

HTTP Proxy: connect through an HTTP or SOCKS proxy. Select whether to use the IDE HTTP proxy settings or custom settings with the specified host name, port, login, and password.
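To check outside the IDE that the credentials or proxy work, you can reproduce a similar setup with Python's standard `urllib.request` module. This is a minimal sketch, assuming HTTP basic authentication and a plain HTTP proxy; `urllib` does not handle SOCKS proxies, and the helper `make_opener` and the example addresses are hypothetical.

```python
import urllib.request
from typing import Optional


def make_opener(server_url: str,
                username: Optional[str] = None,
                password: Optional[str] = None,
                proxy_url: Optional[str] = None) -> urllib.request.OpenerDirector:
    """Return an opener that applies basic auth and/or an HTTP proxy."""
    handlers = []
    if proxy_url:
        # Route both http and https requests through the same proxy.
        # Proxy credentials can be embedded as http://user:pass@host:port.
        handlers.append(urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url}))
    if username is not None:
        # Answer basic-auth challenges from any realm on server_url.
        password_mgr = urllib.request.HTTPPasswordMgrWithDefaultRealm()
        password_mgr.add_password(None, server_url, username, password or "")
        handlers.append(urllib.request.HTTPBasicAuthHandler(password_mgr))
    return urllib.request.build_opener(*handlers)
```

For example, `make_opener("http://rm.example.com:8088", "alice", "secret", "http://proxy.example.com:3128").open("http://rm.example.com:8088", timeout=10)` would send the request through the proxy and answer a basic-auth challenge with the given credentials.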

Kerberos authentication settings: opens the Kerberos authentication settings.

Specify the following options:

Enable Kerberos auth: select to use the Kerberos authentication protocol.

Krb5 config file: a file that contains Kerberos configuration information.

JAAS login config file: a file that consists of one or more entries, each specifying which underlying authentication technology should be used for one or more particular applications.

Use subject credentials only: allows the mechanism to obtain credentials from some vendor-specific location. Select this checkbox and provide the username and password.

To include additional logging information in the PyCharm log, select Kerberos debug logging and JGSS debug logging.

Note that the Kerberos settings are effective for all your Spark connections.
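On the JVM, Kerberos is usually configured through standard system properties. Assuming (this is not confirmed here) that the IDE settings correspond to these properties, the sketch below builds the equivalent `-D` flags: `java.security.krb5.conf` for the Krb5 config file, `java.security.auth.login.config` for the JAAS login config file, and `sun.security.krb5.debug` and `sun.security.jgss.debug` for the two debug options. The helper name and file paths are hypothetical.

```python
from typing import List, Optional


def kerberos_jvm_flags(krb5_conf: Optional[str] = None,
                       jaas_conf: Optional[str] = None,
                       krb5_debug: bool = False,
                       jgss_debug: bool = False) -> List[str]:
    """Map Kerberos options to JVM system-property flags (assumed mapping)."""
    props = {}
    if krb5_conf:
        props["java.security.krb5.conf"] = krb5_conf          # Krb5 config file
    if jaas_conf:
        props["java.security.auth.login.config"] = jaas_conf  # JAAS login config file
    if krb5_debug:
        props["sun.security.krb5.debug"] = "true"             # Kerberos debug logging
    if jgss_debug:
        props["sun.security.jgss.debug"] = "true"             # JGSS debug logging
    return [f"-D{key}={value}" for key, value in props.items()]
```

Such flags are mainly useful when reproducing an authentication problem with a standalone JVM client outside the IDE.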

You can also reuse any of the existing Spark connections. To do so, select it from the Spark Monitoring list.

Once you fill in the settings, click Test connection to ensure that all configuration parameters are correct. Then click OK.
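If Test connection fails, it can help to check outside the IDE whether the server responds at all. Assuming the URL points at a YARN Resource Manager, its REST API returns cluster information at `/ws/v1/cluster/info`. The sketch below requests it with the Python standard library; it is a manual check, not what the Test connection button does internally, and the helper names are hypothetical.

```python
import json
import urllib.request
from urllib.parse import urljoin


def cluster_info_url(base_url: str) -> str:
    # Ensure exactly one slash between the base URL and the REST path.
    return urljoin(base_url.rstrip("/") + "/", "ws/v1/cluster/info")


def check_resource_manager(base_url: str, timeout: float = 10.0) -> dict:
    """Fetch the clusterInfo object; raises URLError/HTTPError on failure."""
    request = urllib.request.Request(cluster_info_url(base_url),
                                     headers={"Accept": "application/json"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)["clusterInfo"]
```

For example, `check_resource_manager("http://localhost:8088")["state"]` would return the Resource Manager state if the server is reachable.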

At any time, you can open the connection settings in one of the following ways:

Go to the Tools | Big Data Tools Settings page of the IDE settings (Ctrl+Alt+S).

Click the settings icon on the Hadoop monitoring tool window toolbar.

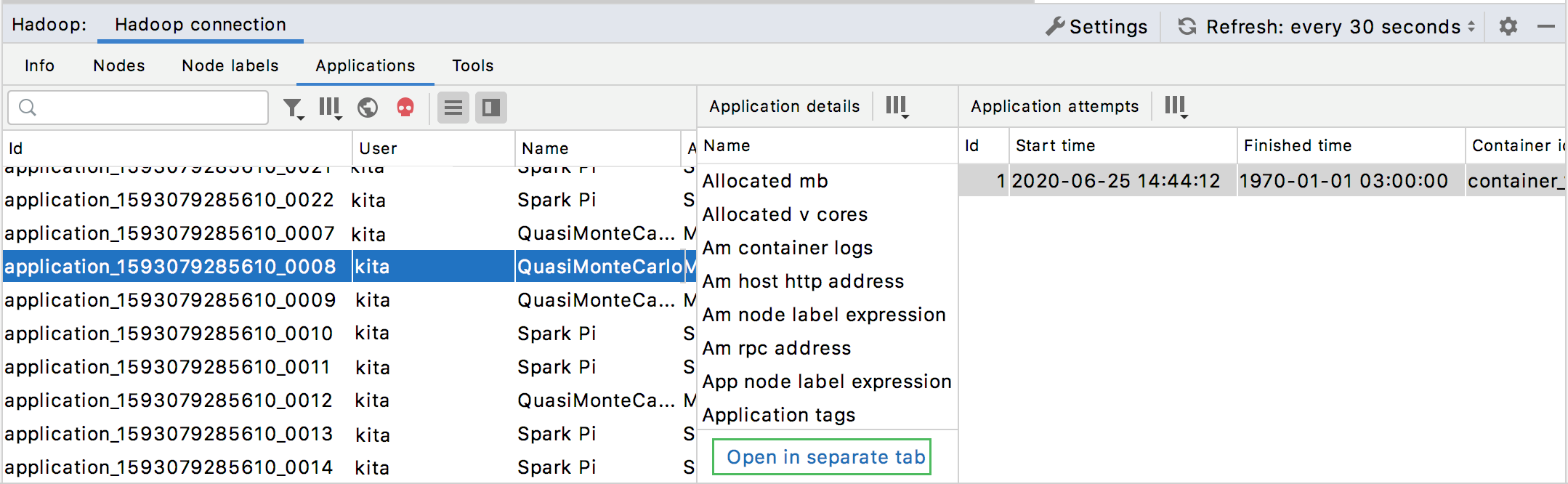

Once you have established a connection to the Hadoop server, the Hadoop monitoring tool window appears. It consists of several areas that show the following data:

Detailed information about the cluster metrics and resources, provided by the Resource Manager.

Refer to the Hadoop documentation for more information about the types of data.
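As an illustration of the kind of cluster metrics involved, the YARN Resource Manager REST API returns them from `/ws/v1/cluster/metrics` as a `clusterMetrics` object. The sketch below condenses such a response into a few values; the field names used (`appsRunning`, `appsPending`, `activeNodes`, `allocatedMB`, `totalMB`) come from that API, so check them against your Hadoop version. The helper name and the derived `memory_used_ratio` are hypothetical.

```python
from typing import Any, Dict


def summarize_cluster_metrics(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Condense a /ws/v1/cluster/metrics JSON response into a few values."""
    metrics = payload["clusterMetrics"]
    total_mb = metrics["totalMB"]
    return {
        "apps_running": metrics["appsRunning"],
        "apps_pending": metrics["appsPending"],
        "active_nodes": metrics["activeNodes"],
        # Share of cluster memory allocated to containers; 0.0 if no memory is reported.
        "memory_used_ratio": metrics["allocatedMB"] / total_mb if total_mb else 0.0,
    }
```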

Adjust layout

In the list of applications, select the one you want to study.

To manage visibility of the monitoring areas, use the following buttons:

Item

Description

Shows the list of execution attempts.

Shows application details.
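The application details and execution attempts correspond to per-application data that the YARN Resource Manager REST API exposes at `/ws/v1/cluster/apps/{appid}` and `/ws/v1/cluster/apps/{appid}/appattempts`. Assuming that API, the sketch below builds both request URLs for a given application ID; the helper name and the example ID are illustrative only.

```python
from typing import Dict
from urllib.parse import quote


def app_urls(base_url: str, app_id: str) -> Dict[str, str]:
    """Build REST URLs for one application's details and its attempts."""
    app_base = base_url.rstrip("/") + "/ws/v1/cluster/apps/" + quote(app_id, safe="")
    return {"details": app_base, "attempts": app_base + "/appattempts"}


if __name__ == "__main__":
    # Hypothetical application ID in the usual application_<timestamp>_<seq> form.
    print(app_urls("http://localhost:8088", "application_1700000000000_0001"))
```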

To focus on a particular application, click the Open in a separate tab link in the Application details monitoring area.

The application details will be shown in a separate tab.

Click the browser icon to preview any monitoring data in a browser.

Once you have set up the layout of the monitoring window and opened or closed the preview areas, you can filter the monitoring data to view particular parameters.

Filter the monitoring data

Use the filter button in the monitoring tabs to show details for applications with a specific status. Select the application statuses you want to monitor.

You can also filter the list of applications by username, start time, and end time. In addition, you can limit the number of items in the filtered list.
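A comparable filter can be expressed against the YARN Resource Manager REST API, whose `/ws/v1/cluster/apps` endpoint accepts `states`, `user`, `startedTimeBegin`, `finishedTimeEnd`, and `limit` query parameters (times in milliseconds since the epoch). Whether the plugin's start time and end time filters map to exactly these parameters is an assumption; the sketch only shows how such a query could be built, and the helper names are hypothetical.

```python
from datetime import datetime, timezone
from typing import Iterable, Optional
from urllib.parse import urlencode


def to_epoch_ms(moment: datetime) -> int:
    # Naive datetimes are interpreted as local time by timestamp().
    return int(moment.timestamp() * 1000)


def apps_query_url(base_url: str,
                   states: Iterable[str] = (),
                   user: Optional[str] = None,
                   started_after: Optional[datetime] = None,
                   finished_before: Optional[datetime] = None,
                   limit: Optional[int] = None) -> str:
    """Build a filtered /ws/v1/cluster/apps URL; unset filters are omitted."""
    params = {}
    state_list = list(states)
    if state_list:
        params["states"] = ",".join(state_list)  # e.g. RUNNING,FAILED
    if user:
        params["user"] = user
    if started_after is not None:
        params["startedTimeBegin"] = to_epoch_ms(started_after)
    if finished_before is not None:
        params["finishedTimeEnd"] = to_epoch_ms(finished_before)
    if limit is not None:
        params["limit"] = limit
    url = base_url.rstrip("/") + "/ws/v1/cluster/apps"
    return f"{url}?{urlencode(params)}" if params else url


if __name__ == "__main__":
    since = datetime(2024, 1, 1, tzinfo=timezone.utc)  # hypothetical time window start
    print(apps_query_url("http://localhost:8088", ["RUNNING", "FAILED"],
                         user="alice", started_after=since, limit=50))
```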

Manage content within a table:

Click a column header to change the order of data in the column.

Click Show/Hide columns on the toolbar to select the columns to be shown in the table.

At any time, you can click the Refresh button in the Hadoop monitoring tool window to refresh the monitoring data manually. Alternatively, you can configure automatic updates at a fixed time interval by using the list located next to the Refresh button. You can select 5, 10, or 30 seconds.
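Automatic refresh amounts to re-fetching the data at a fixed interval. The sketch below is a minimal, hypothetical polling loop (not the plugin's implementation) that accepts only the same 5, 10, or 30 second options; `fetch` stands for any function that retrieves monitoring data, and `sleep` is injectable so the loop can be exercised without waiting.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")

ALLOWED_INTERVALS_S = (5, 10, 30)


def poll(fetch: Callable[[], T], interval_s: int, iterations: int,
         sleep: Callable[[float], None] = time.sleep) -> List[T]:
    """Call fetch() `iterations` times, waiting interval_s seconds between calls."""
    if interval_s not in ALLOWED_INTERVALS_S:
        raise ValueError(f"interval must be one of {ALLOWED_INTERVALS_S}, got {interval_s}")
    results = []
    for i in range(iterations):
        if i:
            sleep(interval_s)  # no wait before the first fetch
        results.append(fetch())
    return results
```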